To an expert, these methods are "common" and nothing new. Newer approaches already deal with Transformer-XL and Evolved Transformer, but I preferred to keep this task basic and simple. So I'll try to quickly explain each method, along with the terms and techniques it uses.

Interestingly, there are many statistical approaches to spelling correction. I will only mention a few that I saw and used. First, however, it is necessary to explain what tokens and n-grams are, since they are common terms in this area. Just to mention, the explanations here come from what I understood from readings and articles, after all the work, code, and various errors that I went through. So I am detailing my own point of view, since some works skip over these subjects or explain them only in general terms, assuming we already know.

Well, tokens are pieces of the context we work with, that is, of texts. Simple, right? No! What does "pieces of the text" mean? Here we have two approaches: the pieces can be words (word level) or characters (char level). So, before coding, we have to know which context we want to work in. For instance, statistical methods can work at word level, building a dictionary of words and their occurrences. In this case the words become tokens, and we can combine them with the n-gram approach to make new tokens if we want. However, a pure char level can be hard to correct, and we can't simply make each single char a token (can you see why?).
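To make the token and n-gram ideas concrete, here is a minimal Python sketch. It is only an illustration under simple assumptions (whitespace splitting, a toy sentence), and the helper names `word_tokens`, `char_tokens`, and `ngrams` are hypothetical, not taken from any library or from the project itself.

```python
from collections import Counter


def word_tokens(text):
    """Word-level tokens: lowercase the text and split on whitespace."""
    return text.lower().split()


def char_tokens(text):
    """Char-level tokens: every character, spaces included, is one token."""
    return list(text)


def ngrams(tokens, n):
    """All contiguous runs of n tokens, in their original order."""
    return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]


text = "the cat sat on the mat"  # toy example text (assumption)

words = word_tokens(text)
word_counts = Counter(words)        # word dictionary with occurrence counts
word_bigrams = ngrams(words, 2)     # new tokens built from pairs of words
char_trigrams = ngrams(char_tokens("cat"), 3)  # char-level 3-grams
```

The same `ngrams` helper works for both levels because it only sees a list of tokens. Grouping characters into n-grams is one way to give char-level tokens some surrounding context.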
The extension not only keeps your browser stable, it also gives you good results for common typos. Both options are open to you. Either can help you tackle typos readily, but the latter is the safer of the two.

Beginning with my mission here. My goal was to study and present some alternatives for post-processing text in Handwritten Text Recognition (HTR), because in this area of research the Kaldi toolkit with SRILM is currently applied to the HTR output through a statistical approach, improving the text recognized by the optical model.

The first search I did turned up some statistical approaches, such as the Norvig and SymSpell techniques, and several "trivial" doubts. What is a token? An n-gram? Filtering? Lemmatization? Language modeling? Where are Kaldi and SRILM? And later on: encoders? Decoders? Attention? Multi-head attention? What do you think? In this way, I found this excellent post Deep Spelling and this GitHub repository. So I saw that neural networks have been growing in this area too.

In fact, I had more doubts than I expected, but on the other hand, I knew I would use character-level tokens and HTR metrics (such as Character Error Rate and Word Error Rate) instead of word-level tokens and grammar error correction metrics (such as Precision and BLEU). Finally, my goal would be simpler too, unlike the Machine Translation and Grammar Error Correction approaches.

At the end of long research and study, I summarized the existing techniques that I would use in the project (there are more, of course). They are:

Statistical: Similarity (n-gram), Norvig, SymSpell, Kaldi + SRILM.

Neural Network: Seq2Seq (Bahdanau and Luong attention), Transformer.
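The HTR metrics mentioned above, Character Error Rate (CER) and Word Error Rate (WER), are commonly defined as the edit distance between the recognized text and the reference, divided by the reference length. The sketch below follows that common definition. The function names are hypothetical and this is not necessarily how the project computes its scores.

```python
def edit_distance(ref, hyp):
    """Levenshtein distance between two sequences (strings or lists)."""
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i]
        for j, h in enumerate(hyp, start=1):
            cost = 0 if r == h else 1
            curr.append(min(prev[j] + 1,          # delete r from ref
                            curr[j - 1] + 1,      # insert h into ref
                            prev[j - 1] + cost))  # substitute or match
        prev = curr
    return prev[-1]


def cer(reference, hypothesis):
    """Character Error Rate: char-level edits / reference length."""
    if not reference:
        raise ValueError("reference must not be empty")
    return edit_distance(reference, hypothesis) / len(reference)


def wer(reference, hypothesis):
    """Word Error Rate: word-level edits / number of reference words."""
    ref_words = reference.split()
    if not ref_words:
        raise ValueError("reference must contain at least one word")
    return edit_distance(ref_words, hypothesis.split()) / len(ref_words)
```

For example, comparing the reference `"hello world"` with the recognized text `"helo world"` gives one missing character out of eleven for CER, and one wrong word out of two for WER. This shows why character-level scores are usually much lower than word-level ones on the same output.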

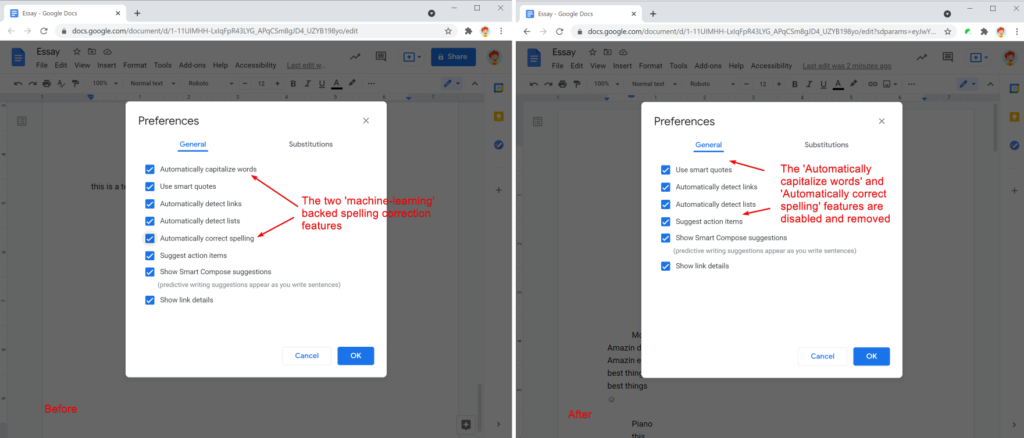

Google Chrome is one of the best browsers the internet has seen so far. What if I told you that there is a way, in fact two ways, to enable automatic spelling correction in your browser?

Enable Automatic Spell Check in Chrome

Chrome has a built-in function that enables automatic spell check. All you need to do is go to "chrome://flags" and search for it. The option is Enable Automatic Spelling Correction. Once you have found the option, click on the Enable link and your Chrome browser will check all the text that you enter.

A word of caution: flags are experimental features and can be prone to bugs, so if you plan on enabling a flag, do it at your own risk.

Extensions are a great way to add useful features to your browser. If you want a fully working extension that helps you tackle typos in Gmail, your Facebook status box, or your microblog on Twitter, you can get the AutoCorrect extension for your browser.
